Constraint Satisfaction

CSCI 4511/6511

Announcements

- Homework 3 is released

- Working with one partner is optionally permitted

- 20 point bonus if turned in by 4 Mar

- Due 15 Mar

- Midterm Exam on 6 Mar

- Project spec released

Midterm Exam on 6 Mar

- In lecture

- DUQ 359, 12:45 PM

- 100 minutes

- Open note:

- Ten sides1 of handwritten notes permitted

Games

Minimax

- Initial state \(s_0\)

- Actions(\(s\)) and To-move(\(s\))

- Result(\(s, a\))

- Is-Terminal(\(s\))

- Utility(\(s, p\))

Minimax

Games of Luck

- Real-world problems are rarely deterministic

- Non-deterministic state evolution:

- Roll a die to determine next position

- Toss a coin to determine who picks candy first

- Precise trajectory of kicked football1

- Others?

Solving Non-Deterministic Games

Previously: Max and Min alternate turns

Now:

- Max

- Chance

- Min

- Chance

😣

We Played Another Game

- Place 11 pieces of candy between you

- Alternating turns:

- Choose to take one or two pieces

- Except:

- After choosing, flip two coins, record total number of heads1

- If total is divisible by 3, take one less piece than you chose

- If total is divisible by 5, take one more piece than you chose

- If total divisible by 15, take no candy

- Last person to take a piece wins all of the candy

Expectiminimax

- “Expected value” of next position

- How does this impact branching factor of the search?

🫠

Filled With Uncertainty

What is to be done?

- Pruning is still possible

- How?

- Heuristic evaluation functions

- Choose carefully!

Non-Optimal Adversaries

- Is deterministic “best” behavior optimal?

- Are all adversaries rational?

- Expectimax

CSPs

Factored Representation

- Encode relationships between variables and states

- Solve problems with general search algorithms

- Heuristics do not require expert knowledge of problem

- Encoding problem requires expert knowledge of problem1

Why?

Constraint Satisfaction

- Express problem in terms of state variables

- Constrain state variables

- Begin with all variables unassigned

- Progressively assign values to variables

- Assignment of values to state variables that “works:” solution

More Formally

- State variables: \(X_1, X_2, ... , X_n\)

- State variable domains: \(D_1, D_2, ..., D_n\)

- The domain specifies which values are permitted for the state variable

- Domain: set of allowable variables (or permissible range for continuous variables)1

- Some constraints \(C_1, C_2, ..., C_m\) restrict allowable values

Constraint Types

- Unary: restrict single variable

- Can be rolled into domain

- Why even have them?

- Binary: restricts two variables

- Global: restrict “all” variables

Constraint Examples

- \(X_1\) and \(X_2\) both have real domains, i.e. \(X_1, X_2 \in \mathbb{R}\)

- A constraint could be \(X_1 < X_2\)

- \(X_1\) could have domain \(\{\text{red}, \text{green}, \text{blue}\}\) and \(X_2\) could have domain \(\{\text{green}, \text{blue}, \text{orange}\}\)

- A constraint could be \(X_1 \neq X_2\)

- \(X_1, X_2, ..., X_{100} \in \mathbb{R}\)

- Constraint: exactly four of \(X_i\) equal 12

- Rewrite as binary constraint?

Assignments

- Assignments must be to values in each variable’s domain

- Assignment violates constraints?

- Consistency

- All variables assigned?

- Complete

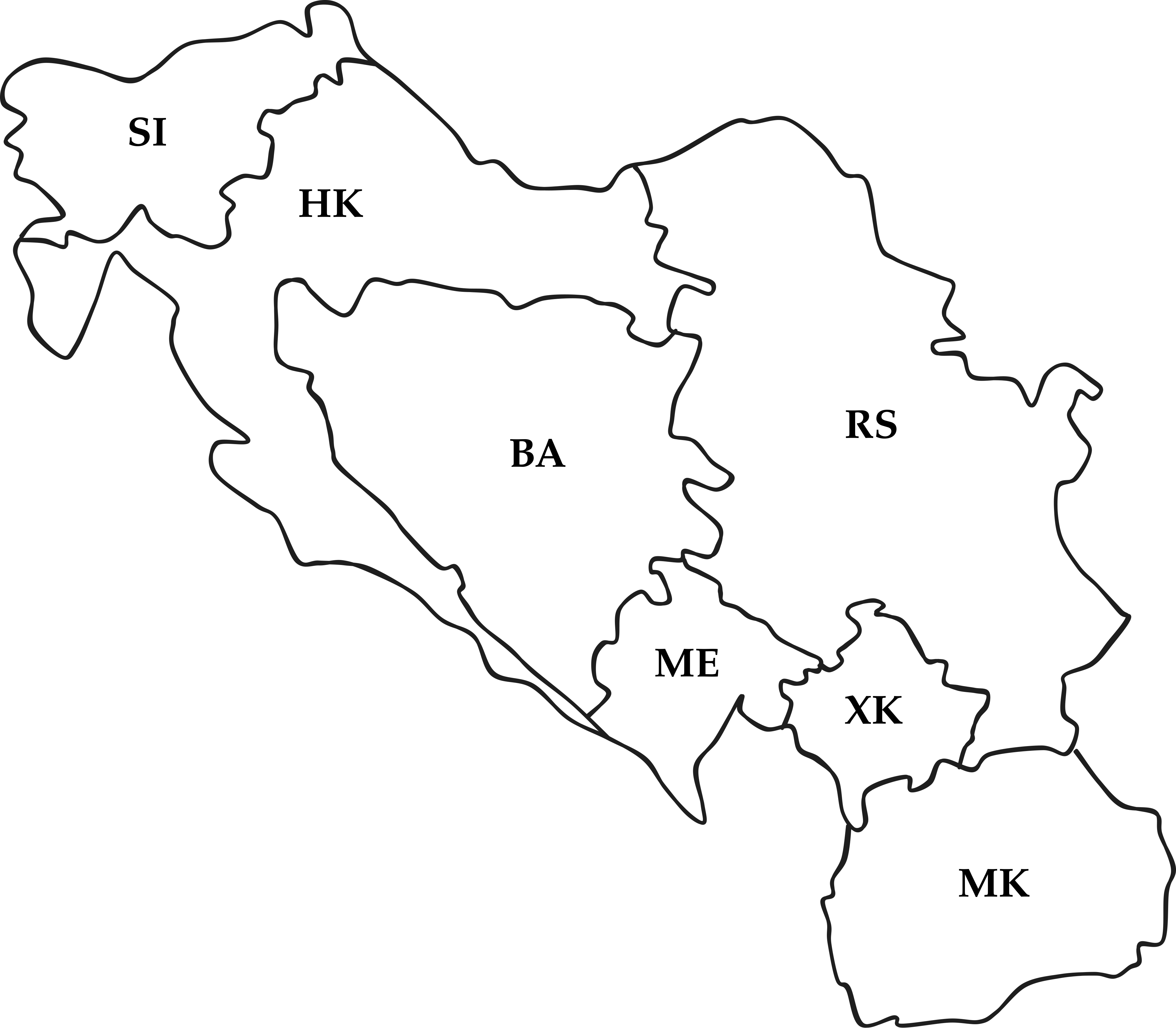

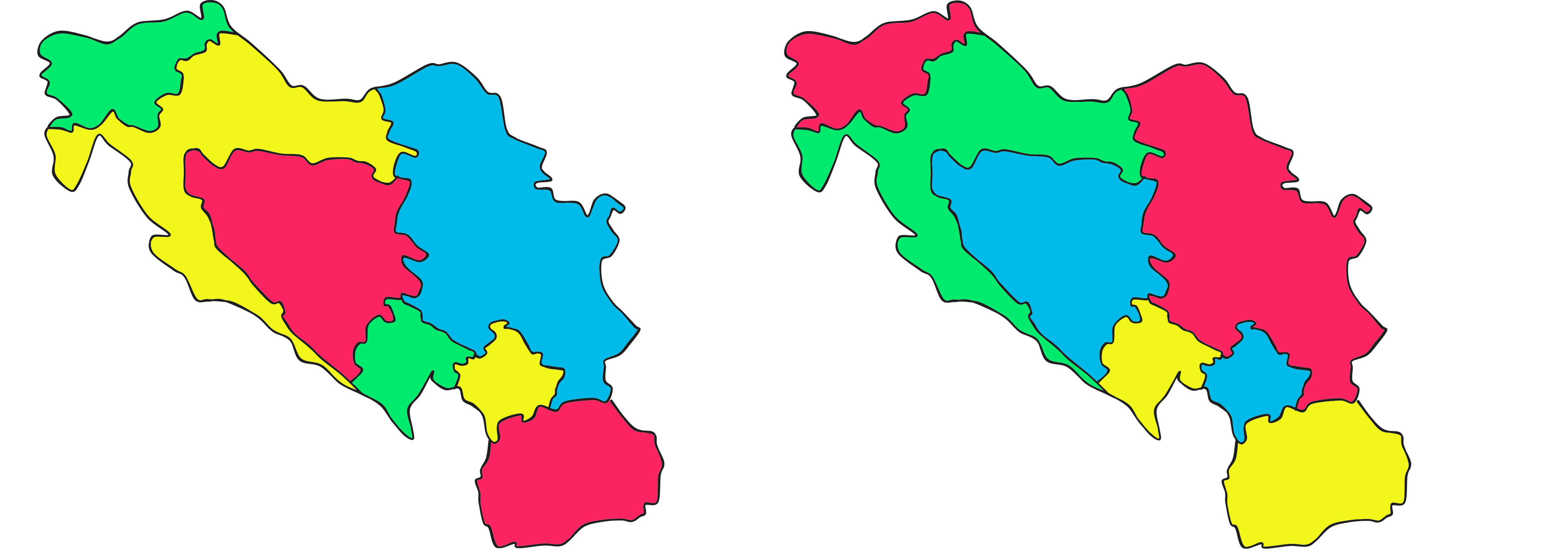

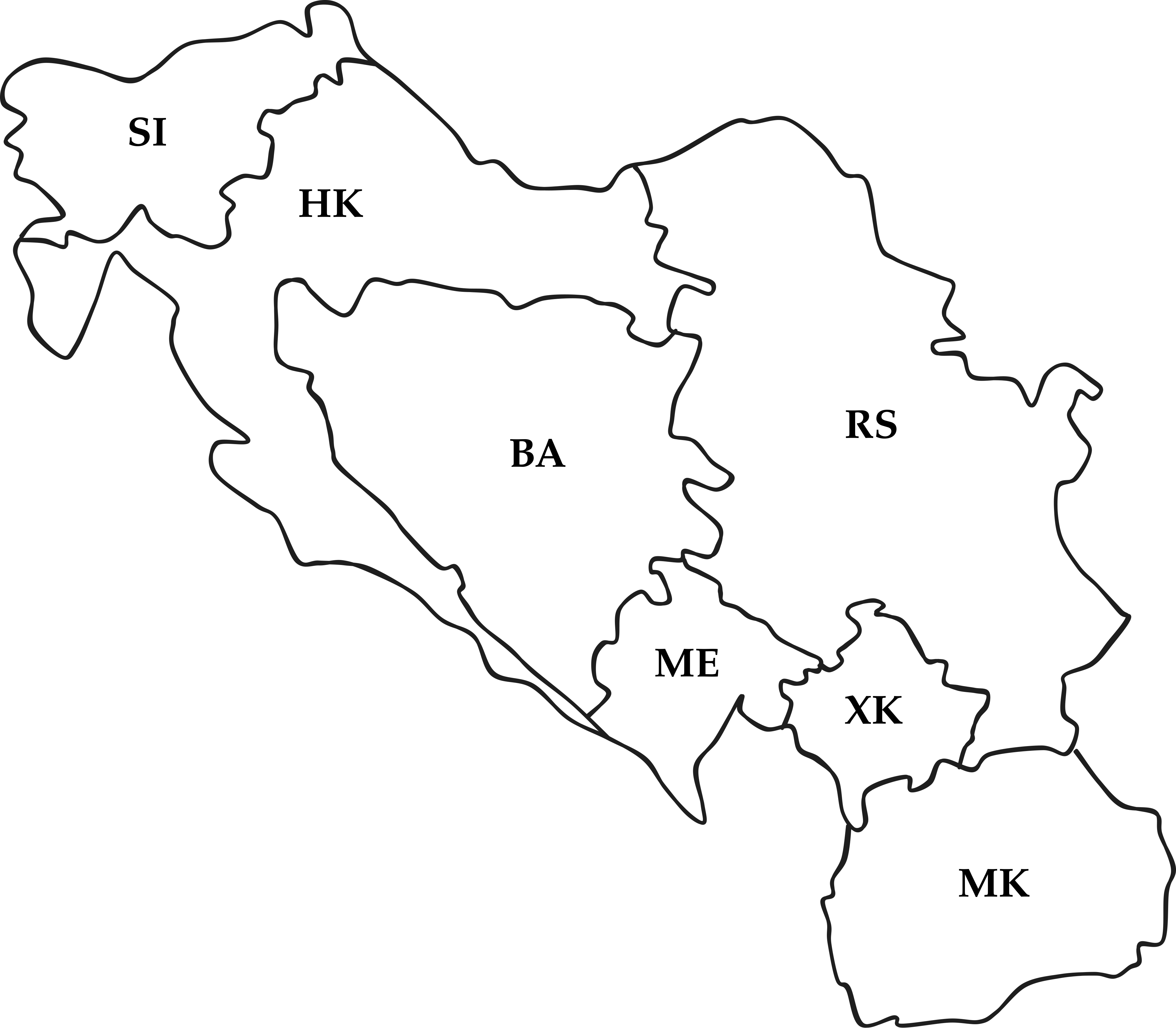

Yugoslavia1

Four-Colorings

Two possibilities:

Formulate as CSP?

Graph Representations

- Constraint graph:

- Nodes are variables

- Edges are constraints

- Constraint hypergraph:

- Variables are nodes

- Constraints are nodes

- Edges show relationship

Why have two different representations?

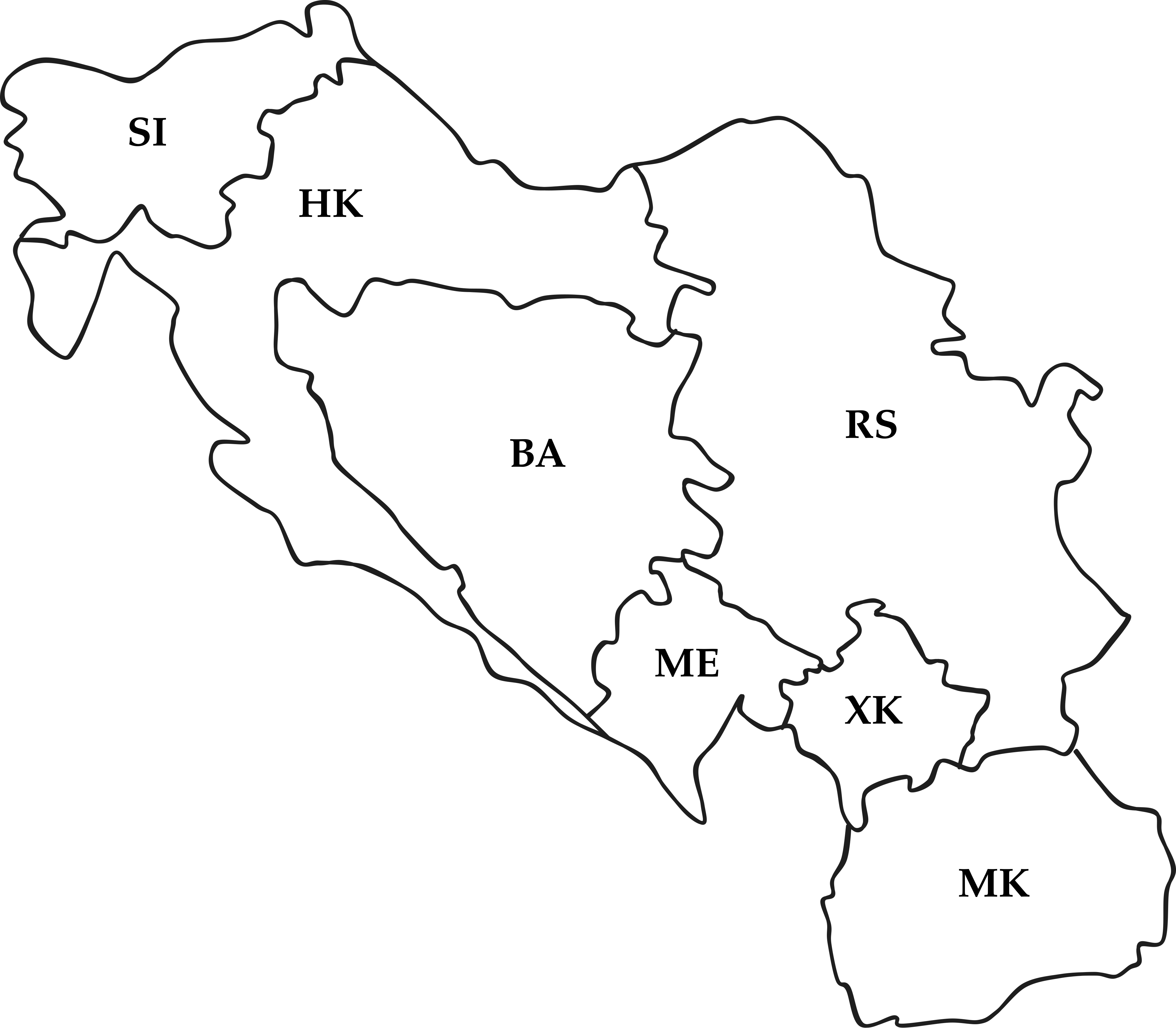

Graph Representation I

Constraint graph: edges are constraints

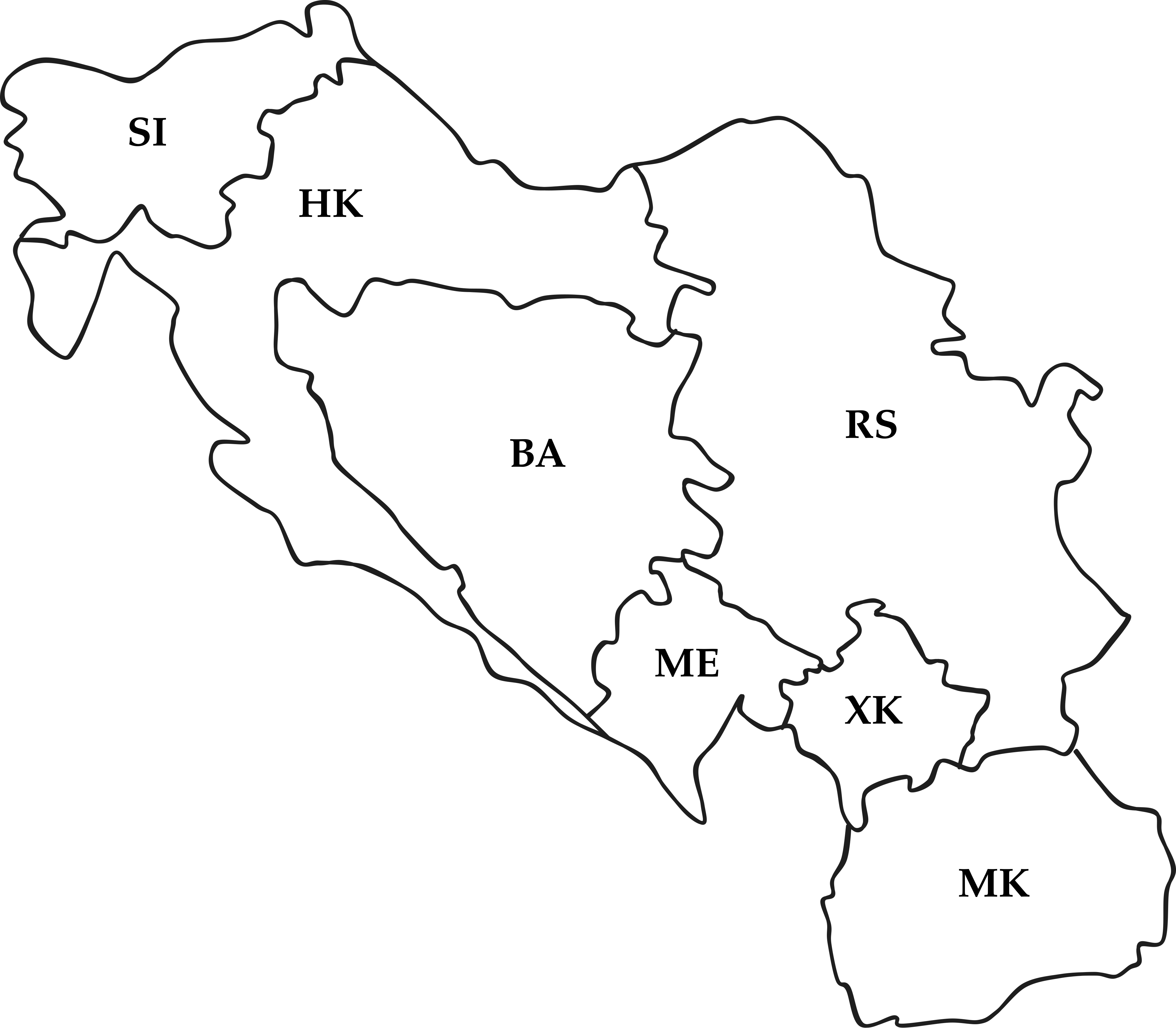

Graph Representation II

Constraint hypergraph: constraints are nodes

How To Solve It

- We can search!

- …the space of consistent assignments

- Complexity \(O(d^n)\)

- Domain size \(d\), number of nodes \(n\)

- Tree search for node assignment

- Inference to reduce domain size

- Recursive search

How To Solve It

What Even Is Inference

- Constraints on one variable restrict others:

- \(X_1 \in \{A, B, C, D\}\) and \(X_2 \in \{A\}\)

- \(X_1 \neq X_2\)

- Inference: \(X_1 \in \{B, C, D\}\)

- If an unassigned variable has no domain…

- Failure

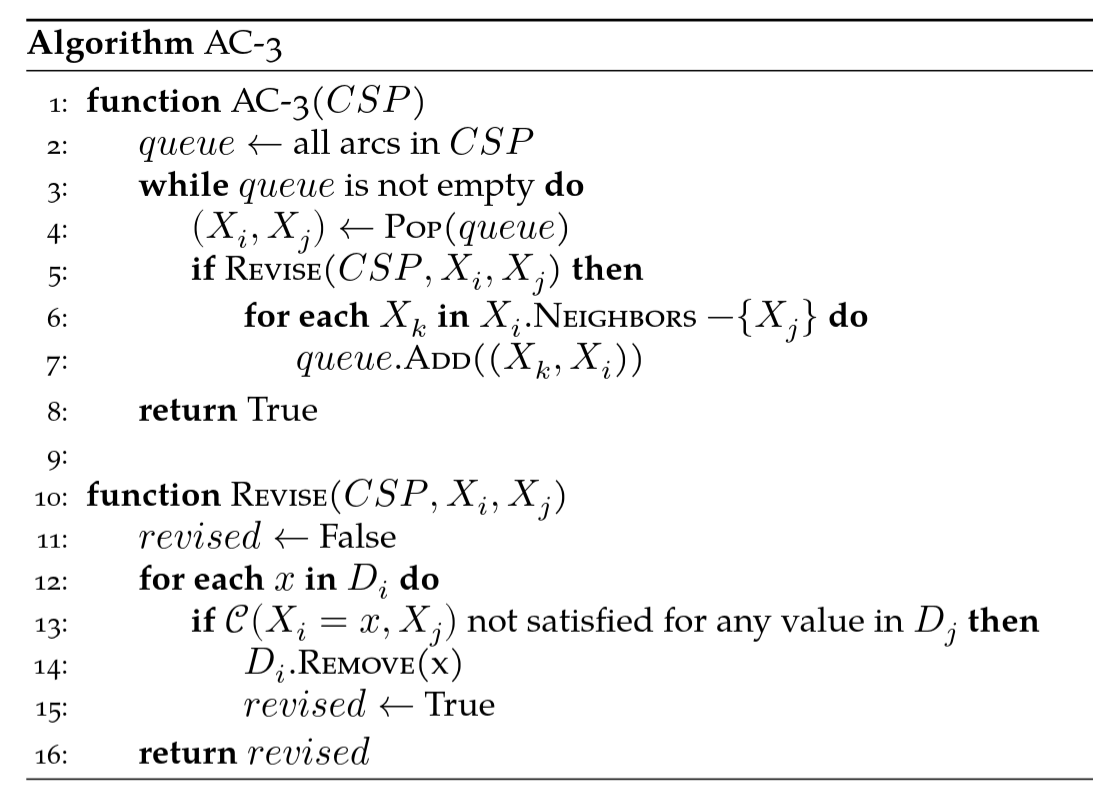

Inference

- Arc consistency

- Reduce domains for pairs of variables

- Path consistency

- Assignment to two variables

- Reduce domain of third variable

AC-3

How To Solve It (Again)

Backtracking search:

- Similar to DFS

- Variables are ordered

- Why?

- Constraints checked each step

- Constraints optionally propagated

How To Solve It (Again)

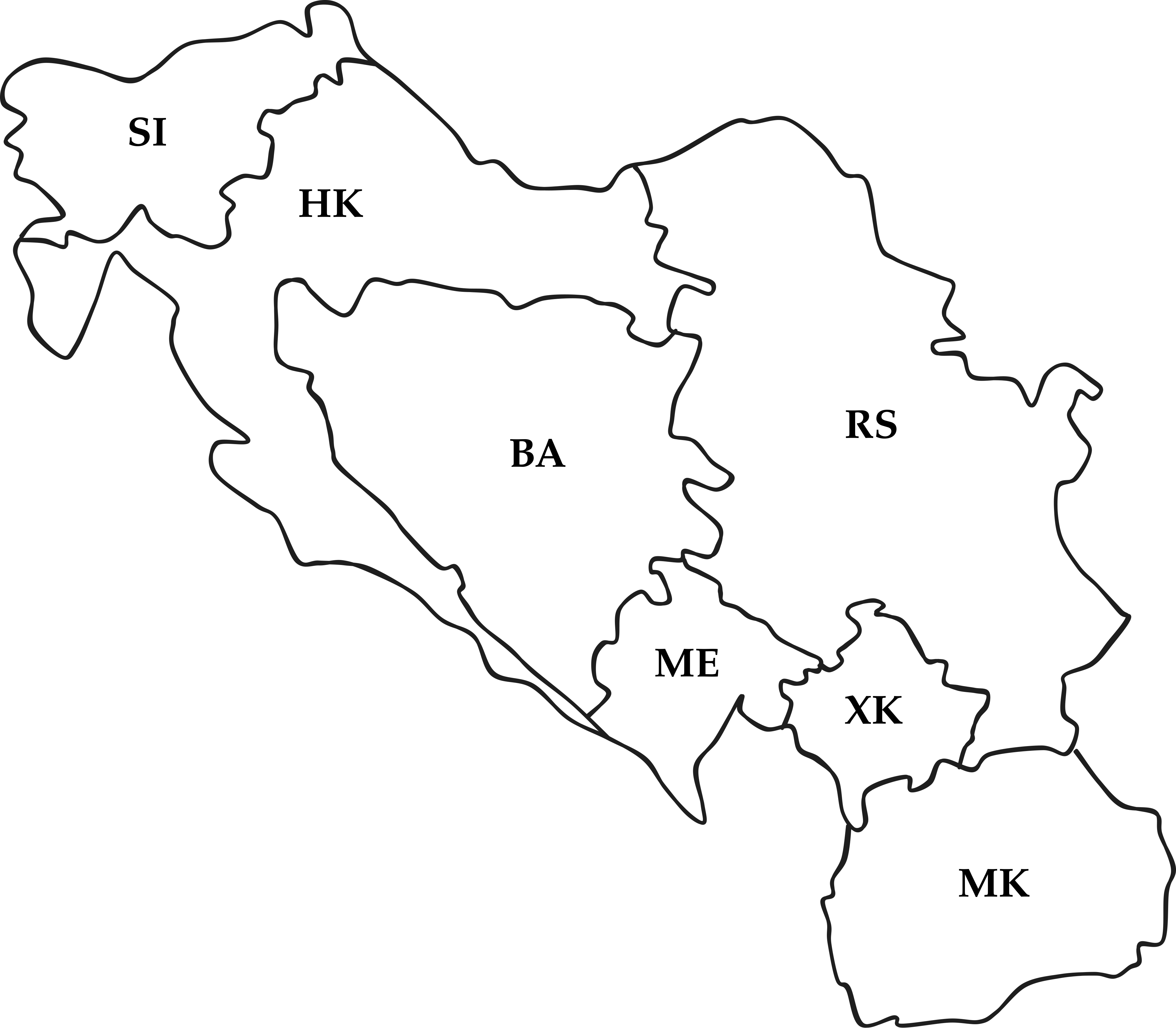

Yugoslav Arc Consistency

Ordering

- Select-Unassigned-Variable(\(CSP, assignment\))

- Choose most-constrained variable1

- Order-Domain-Variables(\(CSP, var, assignment\))

- Least-constraining value

- Why?

Restructuring

Tree-structured CSPs:

Linear time solution

Directional arc consistency: \(X_i \rightarrow X_{i+1}\)

Cutsets

Sub-problems

(Heuristic) Local Search

- Hill climbing

- Random restarts

- Simulated annealing

- Fast?

- Complete?

- Optimal?

Continuous Domains

- Linear:

\[\begin{aligned} \max_{x} \quad & \boldsymbol{c}^T\boldsymbol{x}\\ \textrm{s.t.} \quad & A\boldsymbol{x} \leq \boldsymbol{b}\\ &\boldsymbol{x} \geq 0 \\ \end{aligned}\]

- Convex

\[\begin{aligned} \min_{x} \quad & f(\boldsymbol{x})\\ \textrm{s.t.} \quad & g_i(\boldsymbol{x}) \leq 0\\ & h_i(\boldsymbol{x}) = 0 \\ \end{aligned}\]

Is This Even Relevant in 2026?

- Absolutely yes.

- LLMs are bad at CSPs

- CSPs are common in the real world

- Scheduling

- Optimization

- Dependency solvers

Probability

Randomness and Uncertainty

- We don’t know things about future events

- Someone else might know

- Example: expectimax!

- Ghost could behave randomly

- Ghost could behave according to some plan

- We model behavior as random

The Random Variable

- Uncertain future event: random variable

- Probability:

\[P(x) = \lim_{n \to \infty} \frac{n_x}{n}\]

- Probabilities constrained \(0 \leq P(x) \leq 1\) for any \(x\)

The Random Variable

- In ensemble of events, what fraction represent event \(x\) ?

- What’s troubling about this?

- How do we quantify probability based on observations?

- How do we quantify probability without direct observations?

Plausibility of Statements

- “A is more plausible than B”

- \(P(A) > P(B)\)

- “A is as plausible as B”

- \(P(A) = P(B)\)

- “A is impossible”

- \(P(A) = 0\)

- “A is certain”

- \(P(A) = 1\)

Probability Distribution

- Enumerate possible outcomes1

- Assign probabilities to outcomes

- Distribution: ensemble of outcomes mapped to probabilities

- Works for discrete and continuous cases

Combinatorics

- Enumerating outcomes is a counting problem

- We know how to solve counting problems

- Permutations:

- Ordering \(n\) items: \(n!\)

- Ordering \(n\) items, \(k\) of which are alike: \(\frac{n!}{k!}\)

- … \(k_1\), \(k_2\) of which are alike: \(\frac{n!}{k_1!k_2!}\)

I Am Extremely Sorry

…if you thought this course was going to be about LLMs

Combinatorics

- How many possible outcomes are there?

- How many possible outcomes are there of interest?

- Assume all outcomes have equal probability

- Or don’t

- Divide

- Weight if necessary

Choice

- \(n\) events

- \(k\) are of interest

- \(n-k\) are not of interest

Possible combinations:

\[\binom{n}{k} = \frac{n!}{k!(n-k)!}\]

Bernoulli Trials

- “Single event” that occurs with probability \(\theta\)

- \(P(E) = \theta\)

- \(P(\neg E) = 1 - \theta\)

- Alternate notations:1

- \(P(E^C) = 1 - \theta\)

- \(P(\bar{E}) = 1 - \theta\)

- Examples?

Math Notation

- \(P(E)\)

- Probability of some event \(E\) occuring

- \(P\{X=a\}\)

- Probability of random variable \(X\) taking value \(a\)

- \(p(a)\)

- Probability of random variable taking value \(a\)

Bernoulli Random Variable

- Bernoulli trial:

- Variable, takes one of two values

- Coin toss: \(H\) or \(T\)

- \(P\{X = H\} = \theta\)

- \(P\{X = T\} = 1 - \theta\)

Expected Value

- Variable’s values can be numeric values:

- Coin toss \(H = 8\) and \(T2\)

- \(P\{X = 8\} = \theta\)

- \(P\{X = 2\} = 1 - \theta\)

- Expected value:

- \(E[X] = H \cdot \theta + T \cdot (1-\theta)\)

- \(E[X] = 8 \cdot \theta + 2 \cdot (1-\theta)\)

Expected Value

Of a variable: \[E[X] = \sum_{i=0}^n x_i \cdot p(x_i)\]

Of a function of a variable:

\[E[g(x)] = \sum_{i=0}^n g(x_i) \cdot p(x_i) \neq g(E[X])\]

Variance

- How much do values vary from the expected value?

\[\text{Var}(X) = E[(X - E[X])^2]\]

- \(E[X]\) represents mean, or \(\mu\)

- We’re really interested in \(E[|X-\mu|]\)

- Absolute values are mathematically troublesome

- Standard deviation: \(\sigma\) = \(\sqrt{\text{Var}}\)

Variance

\[\begin{align}\text{Var}(X) & = E[(E[X]-\mu)^2]\\ & = \sum_x (x-\mu)^2 p(x) \\ & = \sum_x (x^2 - 2 x \mu + \mu^2) p(x) \\ & = \sum_x x^2 p(x) - 2 \mu \sum_x x p(x) + \mu^2 \sum_x p(x) \\ & = E[X^2] - 2 \mu \mu + \mu^2 \\ & = E[X^2] - E[X]^2 \end{align}\]

How To Lie With Statistics

References

Stuart J. Russell and Peter Norvig. Artificial Intelligence: A Modern Approach. 4th Edition, 2020.

Mykal Kochenderfer, Tim Wheeler, and Kyle Wray. Algorithms for Decision Making. 1st Edition, 2022.

Stanford CS231

Stanford CS228

UC Berkeley CS188